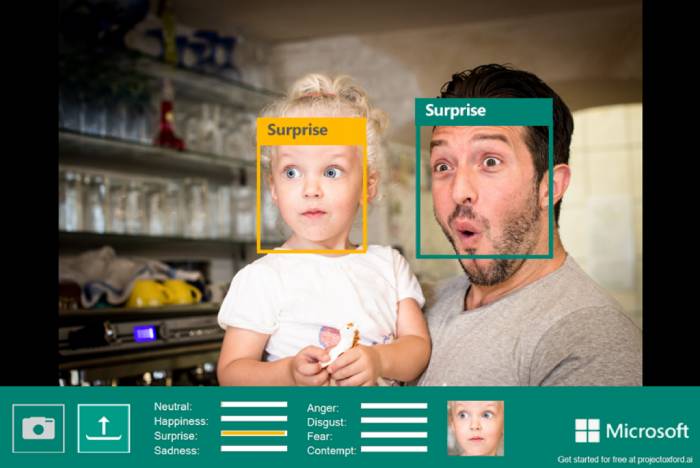

Microsoft is phasing out public access to a number of AI-powered facial analysis tools, including one that claims to identify a subject’s emotion from videos and pictures.

Experts have criticised such “emotion recognition” tools. They say that facial expressions thought to be universal in fact vary across different populations, and that it is unscientific to equate outward displays of emotion with internal feelings.

“Companies can say whatever they want, but the data are clear,” Lisa Feldman Barrett, a psychology professor at Northeastern University who reviewed the topic of AI-powered emotion recognition, told The Verge in 2019. “They can detect a scowl, but that’s not the same thing as detecting anger.”

The decision is part of a broader overhaul of Microsoft’s AI ethics guidelines. The company’s updated Responsible AI Standards (first presented in 2019) emphasise accountability, so that Microsoft knows who utilises its services, and call for more human oversight over how these technologies are used.

Accordingly, Microsoft will limit access to some parts of its facial recognition services, known as Azure Face, while completely removing others. Users who apply to use Azure Face for face recognition, for instance, must tell Microsoft exactly how and where its systems will be deployed. Some lower-risk use cases, such as automatically blurring faces in photos and videos, will remain open-access.

In addition to removing public access to its emotion recognition tool, Microsoft is retiring Azure Face’s capacity to recognise “attributes such as gender, age, smile, facial hair, hair, and makeup.”

Microsoft’s chief responsible AI officer, Natasha Crampton, announced the development in a blog post. “Experts inside and outside the company have highlighted the lack of scientific consensus on the definition of ‘emotions,’ the challenges in how inferences generalise across use cases, regions, and demographics, and the heightened privacy concerns around this type of capability,” she wrote.

According to Microsoft, it will stop providing these features to new clients as of today, June 21, and it will revoke access for current users on June 30, 2023.

Although it is retiring public access to these technologies, Microsoft will still use them in at least one of its own products: Seeing AI, an app that uses machine vision to describe the environment for people with visual impairments.

Tools like emotion recognition “can be valuable when used for a set of controlled accessibility scenarios,” according to Sarah Bird, principal group product manager for Azure AI at Microsoft. It’s unclear whether these tools will be used in any other Microsoft products.

Microsoft is also adding similar restrictions to its Custom Neural Voice feature, which enables users to create AI voices from recordings of real people (sometimes known as an audio deepfake).

The tool “has exciting potential in education, accessibility, and entertainment,” Bird writes. However, she cautions that it “is also easy to imagine how it could be used to inappropriately impersonate speakers and deceive listeners.” According to Microsoft, future access to the feature will be limited to “managed customers and partners,” and the company will “ensure the active participation of the speaker when creating a synthetic voice.”
